Unity Catalog Azure Databricks

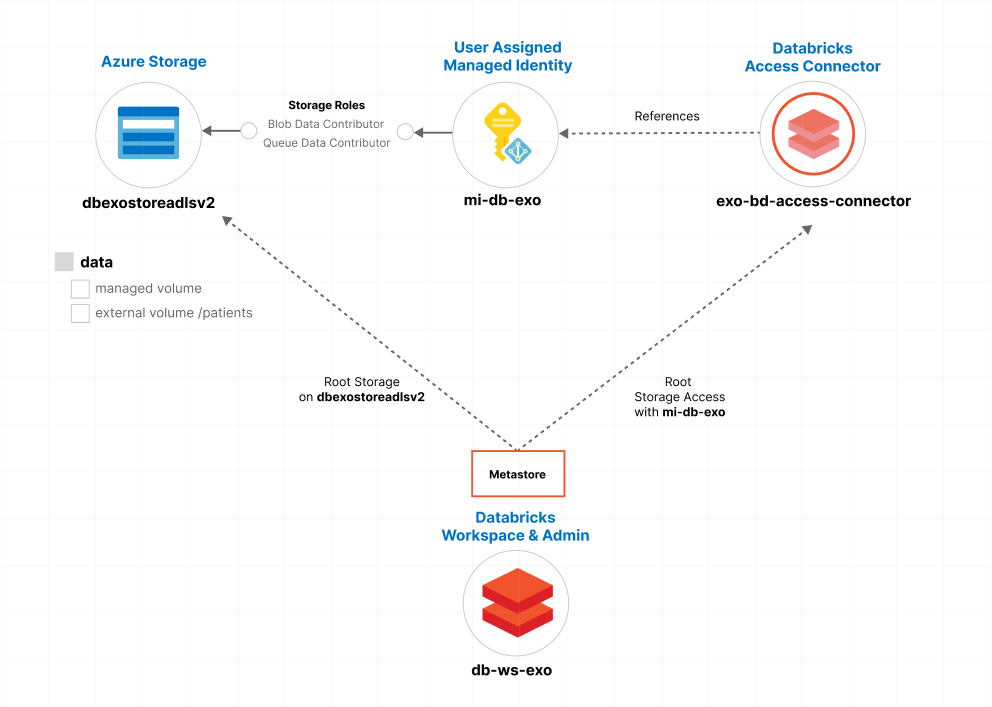

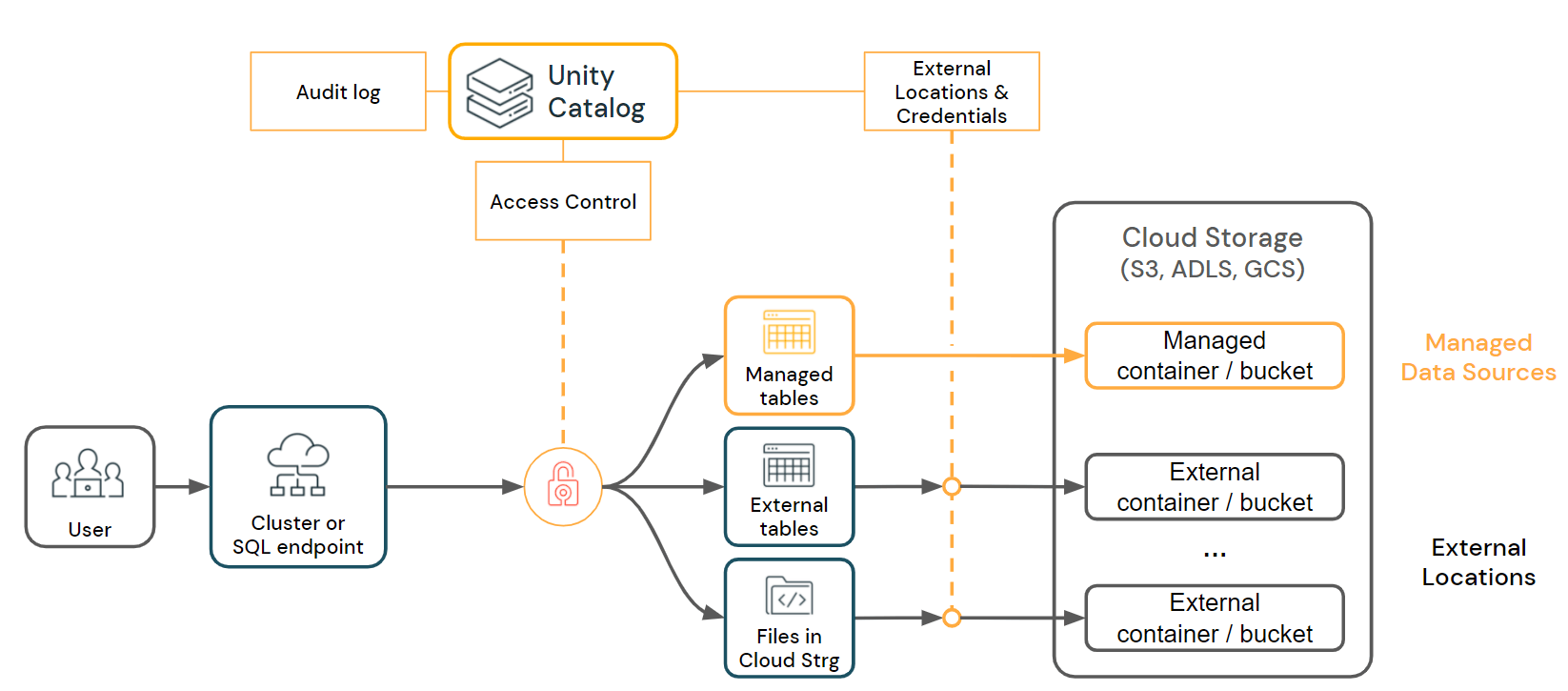

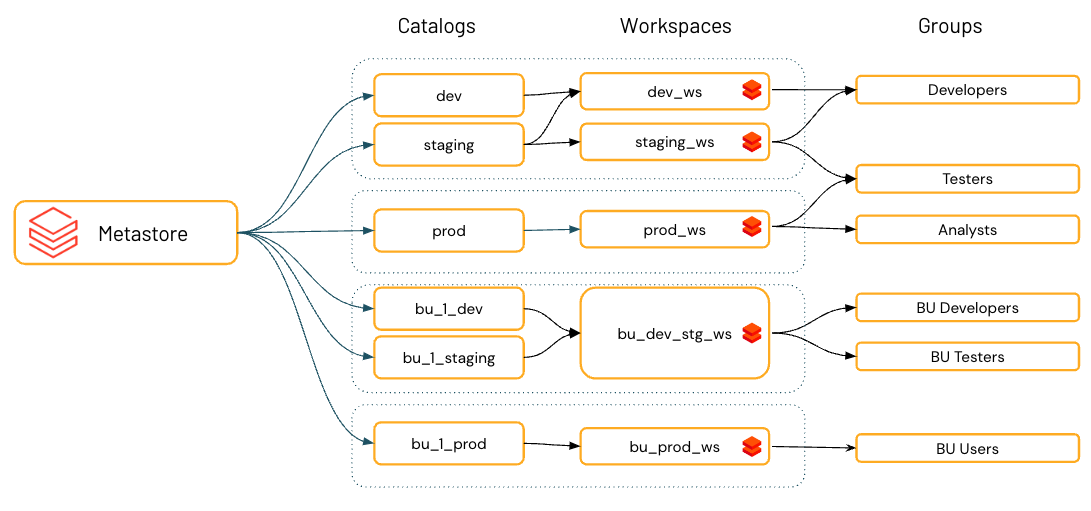

Databricks introduced Unity Catalog as a unified governance solution designed for data lakehouses. Unity Catalog (UC) is the foundation for all governance and management of data objects in the Databricks Data Intelligence Platform. On Azure Databricks, it is a unified governance solution for data and AI assets that provides centralized access control, auditing, lineage, and data discovery capabilities across workspaces. It helps simplify security and governance of your data and AI assets by ... Since its launch several years ago, Unity Catalog has ...

Key features of Unity Catalog. Learn how to use Unity Catalog, a metastore that provides access control, data lineage, and dynamic views for Azure Databricks. Catalogs contain schemas, which in turn can contain tables, views, volumes, models, and ... They allow custom functions to be defined, used, and ... This article shows how to view, update, and delete catalogs in Unity Catalog.

Follow the steps to create the metastore, ... Follow the steps to enable your workspace, add users, and assign ... Set force_destroy in the databricks_metastore section of the Terraform configuration to delete a metastore and its ...

Unity Catalog provides a suite of tools to configure secure connections to cloud object storage. These connections provide access to complete the following actions: ... Learn how Unity Catalog uses cloud object storage and how to access cloud storage and cloud services from Azure Databricks.

Databricks recommends configuring DLT pipelines with Unity Catalog. Pipelines configured with Unity Catalog publish all defined materialized views and streaming tables to ... Ingest raw data into a ...

We’re making it easier than ever for Databricks customers to run secure, scalable Apache Spark™ workloads on Unity Catalog compute with Unity Catalog Lakeguard. The Azure Databricks intelligence platform makes it easy for you to securely connect Llama 4 to your enterprise data using Unity Catalog governed tools to build agents with ... Power BI semantic models can be ... These articles can help you with Unity Catalog.

Step by step guide to setup Unity Catalog in Azure La data avec Youssef

Demystifying Azure Databricks Unity Catalog Beyond the Horizon...

How to Create Unity Catalog Volumes in Azure Databricks

Introducing Unity Catalog A Unified Governance Solution for Lakehouse

Unity Catalog best practices Azure Databricks Microsoft Learn

Databricks Unity Catalog How to Configure Databricks unity catalog

Unity Catalog setup for Azure Databricks YouTube

Learn How To Use Unity Catalog, A Metastore That Provides Access Control, Data Lineage, And Dynamic Views For Azure Databricks.
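To make the access-control part concrete, here is a minimal Python sketch that builds a Databricks SQL `GRANT` statement as a string. It only formats text and does not connect to a workspace. The helper name `grant_sql`, the small privilege allow-list, and the names `main.sales.orders` and `data-consumers` are assumptions chosen for illustration, not taken from any real deployment.

```python
# Hypothetical helper: formats a Unity Catalog GRANT statement as text.
# The allow-list below is a small illustrative subset of privileges.
ALLOWED_PRIVILEGES = {"SELECT", "MODIFY", "USE CATALOG", "USE SCHEMA"}


def grant_sql(privilege: str, securable_type: str, name: str, principal: str) -> str:
    """Return a GRANT statement for one privilege on one securable object."""
    priv = privilege.upper()
    if priv not in ALLOWED_PRIVILEGES:
        raise ValueError(f"unsupported privilege in this sketch: {privilege!r}")
    # Principals such as group names may contain hyphens, so backtick-quote
    # them and double any embedded backtick.
    quoted_principal = "`" + principal.replace("`", "``") + "`"
    return f"GRANT {priv} ON {securable_type.upper()} {name} TO {quoted_principal}"


# Example with placeholder names (assumed, not from the source).
statement = grant_sql("select", "table", "main.sales.orders", "data-consumers")
print(statement)
```

The resulting string could be run in a SQL editor or passed to `spark.sql(...)` in a notebook by a user who holds sufficient privileges on the object.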

A Catalog Contains Schemas (Databases), And A Schema Contains Tables, Views, Volumes, ...
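The catalog, schema, and object hierarchy is what gives Unity Catalog objects their three-part names of the form `catalog.schema.object`. The Python sketch below shows one way to assemble such a name safely. The helper names `quote_ident` and `full_name` and the example names `main`, `sales`, and `orders` are assumptions for illustration only.

```python
def quote_ident(part: str) -> str:
    """Backtick-quote one identifier, doubling any embedded backtick."""
    if not part:
        raise ValueError("identifier parts must be non-empty")
    return "`" + part.replace("`", "``") + "`"


def full_name(catalog: str, schema: str, obj: str) -> str:
    """Join catalog, schema, and object into a three-level name."""
    return ".".join(quote_ident(p) for p in (catalog, schema, obj))


# Placeholder names (assumed): a table "orders" in schema "sales" of catalog "main".
table = full_name("main", "sales", "orders")
print(f"SELECT * FROM {table} LIMIT 10")
```

Quoting every part keeps the name valid even when a part contains characters such as hyphens or spaces.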

They Allow Custom Functions To Be Defined, Used, And ...

Azure Databricks Intelligence Platform Makes It Easy For You To Securely Connect Llama 4 To Your Enterprise Data Using Unity Catalog Governed Tools To Build Agents With ...
